Innovation through

open collaboration

Open-source research is part of our DNA. Here we share what we're building across language models, evaluation, and AI agents.

Latest research artifacts

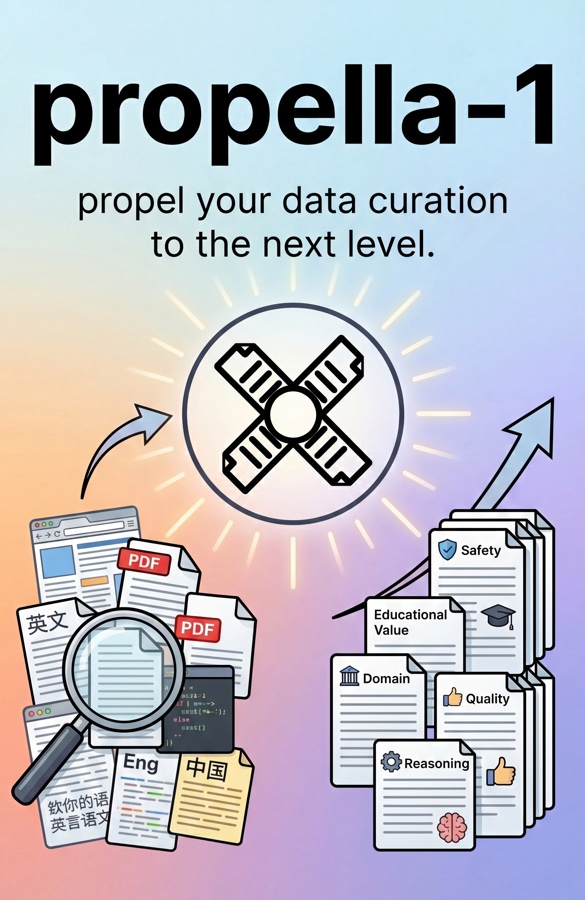

propella-1

A family of small multilingual LLMs for annotating text documents across six categories: core content, classification, quality and value, audience and purpose, safety and compliance, and geographic relevance. These annotations help filter, select, and curate LLM training data at scale. The models outperform much larger general-purpose baselines.

sui-1: Summarization with Unique Identifiers

A 24B-parameter LLM for abstractive summarization with inline citations. Every claim is traceable to its source sentence. It supports documents with more than 2M tokens and outperforms all tested open-weight baselines, including models with 3x more parameters.

base-eval

Curated lm-evaluation-harness task configurations for evaluating English and German base models. Every task is validated against reference models, enabling benchmark suites for early-stage pretraining and in-loop evaluation.

inference-hive

Distributed LLM inference at scale for SLURM clusters. Configure cluster, server, and data settings, then scale across thousands of GPUs with near-linear throughput.

Publicly funded research projects

Transparent AI for Europe

ellamind is proud to be part of a consortium of 20 leading European research institutions, companies, and EuroHPC centres building a family of high-performing multilingual foundation models for commercial, industrial, and public-sector use. These transparent, compliant open-source models aim to democratize access to high-quality AI and strengthen Europe's competitiveness.

Learn more

Building Europe's AI future

As a partner in LLMs4EU, ellamind helps develop cutting-edge language models that put European languages, values, and innovation at the center. This EU-funded consortium combines expertise from across the continent to build AI technologies that genuinely serve European needs. Our open-source approach helps organizations of all sizes access and benefit from advanced AI.

Learn more

Optimized Use of Open-Source LLMs in SMEs

LLM4KMU brings together leading research institutions, companies, and innovation partners in North Rhine-Westphalia to broaden small and medium-sized enterprises' access to large language models. Through a collaborative experimentation platform, shared know-how, and prototypical use cases, the project helps SMEs apply open-source AI to real products and services.

Learn more

Sovereign & Open Models for Europe

ellamind is part of SOOFI (Sovereign Open Source Foundation Models), a German consortium of research institutions and start-ups developing open, sovereign AI language models as a European alternative to existing systems. SOOFI aims to build a powerful open-source foundation model aligned with European values and regulatory requirements from day one.

We actively collaborate with research communities and partners, such as LAION, Open-Sci, ontocord.ai, EuroLLM, Hessian.AI, AlignmentLab AI, DFKI, and others to pool resources, share insights & knowledge, and advance the collective understanding of LLMs.

Built on a foundation of

open-source AI research

ellamind grew out of the open-source AI community. Our team trained and released some of the earliest and most widely used open German large language models, downloaded more than 1,000,000 times on Hugging Face. That hands-on experience in training, evaluating, and applying LLMs across languages, domains, and use cases is the foundation everything at ellamind is built on.

Open German LLMs

We pioneered open-weight German language models at a time when high-quality non-English LLMs were scarce, helping establish a vibrant ecosystem for German AI.

From research to product

Our deep expertise in model training and evaluation directly informs how we build our products. We understand LLMs & agents from the inside out, not just as API consumers.

Pre-training & fine-tuning expertise

We have deep, hands-on experience in continuous pre-training and fine-tuning of language models, allowing us to optimize and adapt models for specific tasks and languages.

Evaluation & open datasets

We develop advanced evaluation techniques and publish open-source datasets and benchmarks that the community uses to improve existing and new models.

Community-driven

Through DiscoResearch and collaborations with Hessian.AI, LAION, and DFKI, we have always believed that openness and collaboration drive the best AI outcomes.

Frequently asked questions

Find answers to frequently asked questions about our research. If you still can't find your question here, feel free to contact us.