How N+1 evaluation catches the failures that single-turn testing misses, and how to set it up in elluminate.

Your support bot scores 92% on your test set. The product team is happy. Then you check the escalation queue and find tickets like this:

Customer: I ordered running shoes and they arrived in the wrong size.

Bot: I’d be happy to help with a return! What’s your order number?

Customer: ORD-2847193.

Bot: Found it! Nike Air Zoom Pegasus, size 10, delivered April 3rd. You’re within the return window.

Customer: Great. So what do I need to do to send them back?

Bot: I’d be happy to help with a return! What’s your order number?

The bot loops. It forgets what was already discussed. It asks for information the customer already provided. And your 92% test score? It was measured on single-turn questions — isolated prompts with no conversation history. The bot never had to remember anything.

This is the gap that most evaluation setups miss entirely. We ran into this exact problem while working with one of our customers, a German IT provider in the health insurance sector. They operate a customer-facing support bot and had solid single-turn evaluations in place, but no way to systematically test how the bot performs across multi-turn conversations. Together, we built the workflow described in this post.

Single-turn testing hides multi-turn failures

When you test a chatbot with individual questions like “How do I return an item?” or “What’s your refund policy?”, you’re testing its knowledge. That’s necessary, but it’s only half the picture. In production, customers don’t ask isolated questions. They have conversations: they provide context, ask follow-ups, change direction, get frustrated when they have to repeat themselves.

The failures that actually drive escalations are almost always multi-turn failures:

- The bot loses context and asks for the order number twice

- The bot contradicts itself (“You’re eligible for a refund” → “Unfortunately, this item is non-refundable”)

- The bot gives a generic answer instead of building on what was already established

- The bot ignores the customer’s specific situation and falls back to canned responses

Single-turn evaluation will never catch these. You need to test the bot in conversation.

Two approaches to multi-turn evaluation

There are two main ways to evaluate chatbot conversations:

| N+1 Evaluation | Simulated Conversations | |

|---|---|---|

| How it works | Provide conversation history up to turn N, evaluate the bot’s response at turn N+1 | An LLM plays the customer and runs a full dialogue with the bot |

| Data source | Real conversations or hand-crafted test cases | Synthetically generated scenarios with personas |

| Best for | Testing against known failure patterns; regression testing after changes | Proactively exploring edge cases before they happen in production |

| Complexity | Low — works with data you already have | Higher — requires persona design and simulation orchestration |

This post focuses on N+1 evaluation — the approach you can start using today with conversations you already have. Simulated conversations are powerful, but N+1 is where you should begin.

The N+1 idea in 30 seconds

The concept is simple:

- Take a real conversation up to the customer’s last message (turn N)

- Let the bot generate its next response (turn N+1)

- Evaluate that response against specific quality criteria

You’re not testing the bot in isolation — you’re testing it in context. The criteria can check whether the bot maintained context, built on previous information, or contradicted earlier turns.

The key constraint: the conversation must end with a customer message. That’s what triggers the bot to generate its next response — the one you’re evaluating.

Here’s what this looks like in practice. A customer has exchanged several messages about a wrong-size delivery. Their last message is: “Great. So what do I need to do to send them back?” The bot already confirmed the order number, identified the product, and verified the return window. A good response should provide specific return instructions that build on this context — not restart from scratch.

Setting it up in elluminate

Let’s walk through the full setup using an e-commerce support bot as our example.

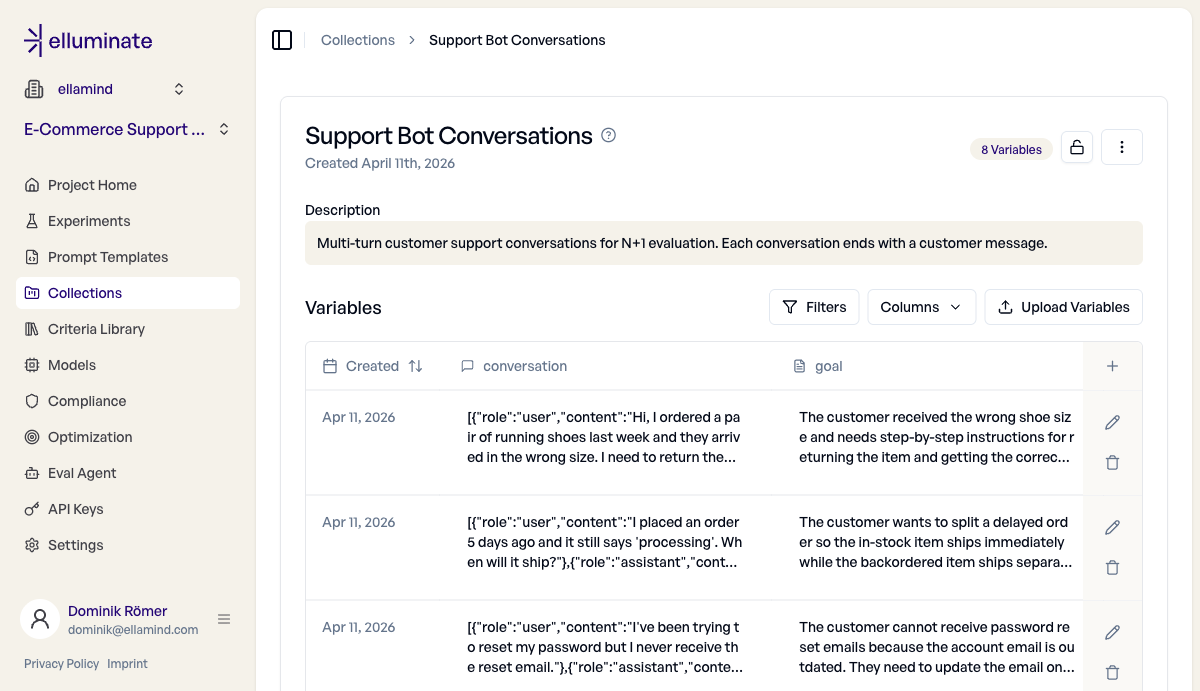

Step 1: Create a Collection with a Conversation column

In elluminate, a Collection holds your test cases. For multi-turn evaluation, you need a special column type: Conversation. This column stores structured message histories — alternating customer and bot messages — that elluminate sends to the model during experiments.

Create a collection with three columns:

conversation(type: Conversation) — the structured chat historygoal(type: Text) — a description of what the customer is trying to achievecategory(type: Category) — the topic bucket (returns, billing, account issues, etc.)

The goal column is important — it feeds into your evaluation criteria later, so each test case gets evaluated against its own specific success definition.

Here’s a collection with 8 support conversations. Each row contains the conversation history, the customer’s goal, and a category for filtering.

Step 2: Build your test set

Each conversation in your collection is a JSON array of messages with role and content:

[

{

"role": "user",

"content": "I ordered running shoes and they arrived in the wrong size."

},

{

"role": "assistant",

"content": "I'd be happy to help! What's your order number?"

},

{ "role": "user", "content": "ORD-2847193." },

{

"role": "assistant",

"content": "Found it! Nike Air Zoom Pegasus, size 10. You're within the return window."

},

{

"role": "user",

"content": "Great. So what do I need to do to send them back?"

}

]Where do these conversations come from? Three sources:

- Real support logs — export conversations from your support platform and format them as JSON

- Hand-crafted test cases — write realistic scenarios based on your most common (and most problematic) customer journeys

- Escalation tickets — the cases where the bot failed in production are the most valuable test cases you can have

Start with 8—15 conversations across your top issue categories. You can always add more later. A good mix: ~60% typical conversations (happy path), ~30% tricky follow-ups (topic switches, clarifications), and ~10% adversarial cases (frustrated customers, contradictory information).

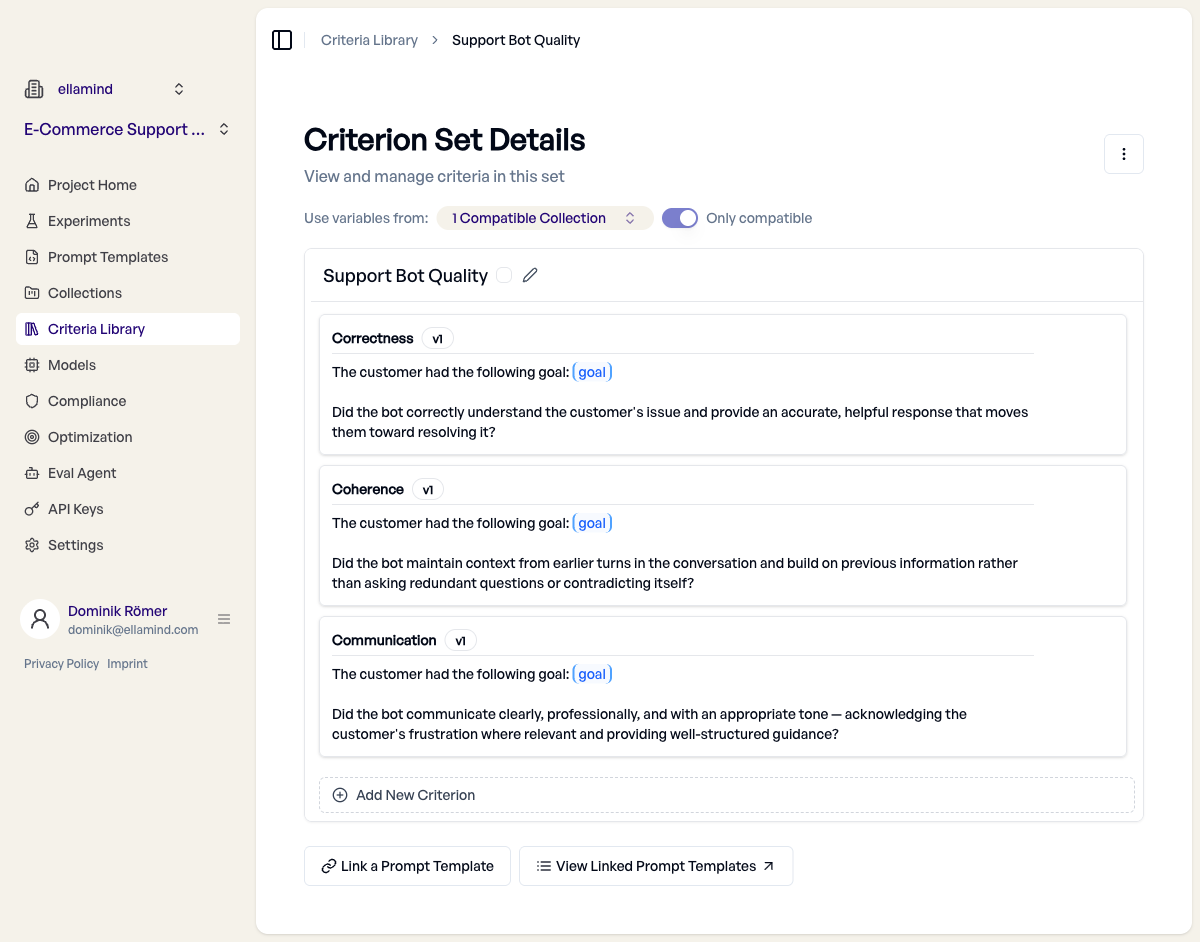

Step 3: Define evaluation criteria

Criteria in elluminate are binary yes/no questions — each one checks a specific quality aspect of the bot’s response. For multi-turn evaluation, three criteria cover most support bot use cases:

Each criterion uses {{goal}} placeholders that get filled from the collection’s goal column:

Correctness: “Did the bot correctly understand the customer’s issue and provide an accurate, helpful response that moves them toward resolving it?”

This is the baseline — is the answer actually right?

Coherence: “Did the bot maintain context from earlier turns and build on previous information rather than asking redundant questions or contradicting itself?”

This is the multi-turn-specific criterion. It catches the exact failures that single-turn testing misses.

Communication: “Did the bot communicate clearly, professionally, and with an appropriate tone — acknowledging the customer’s frustration where relevant?”

Tone matters. A technically correct response that’s cold and robotic still creates a bad customer experience.

Notice the {{goal}} placeholder in each criterion. At evaluation time, elluminate replaces it with the value from the goal column for that specific test case. This means the “return the wrong-size shoes” conversation gets evaluated against that goal, while the “stolen package” conversation gets evaluated against its goal. Same criteria, specific evaluation.

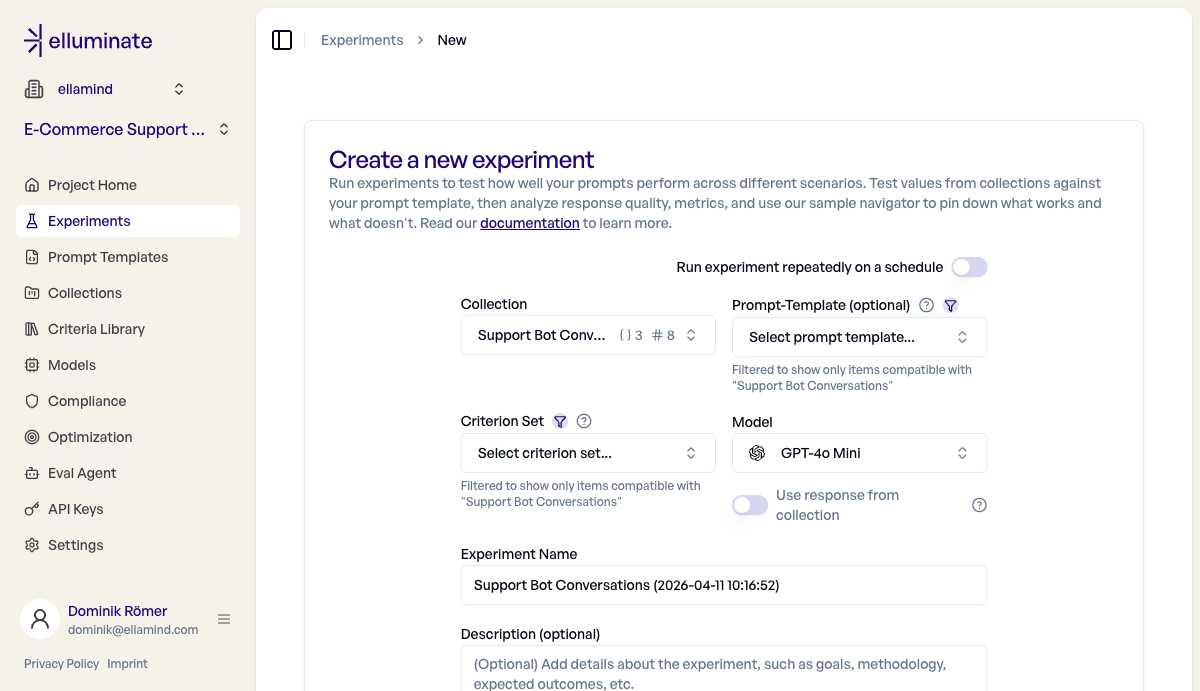

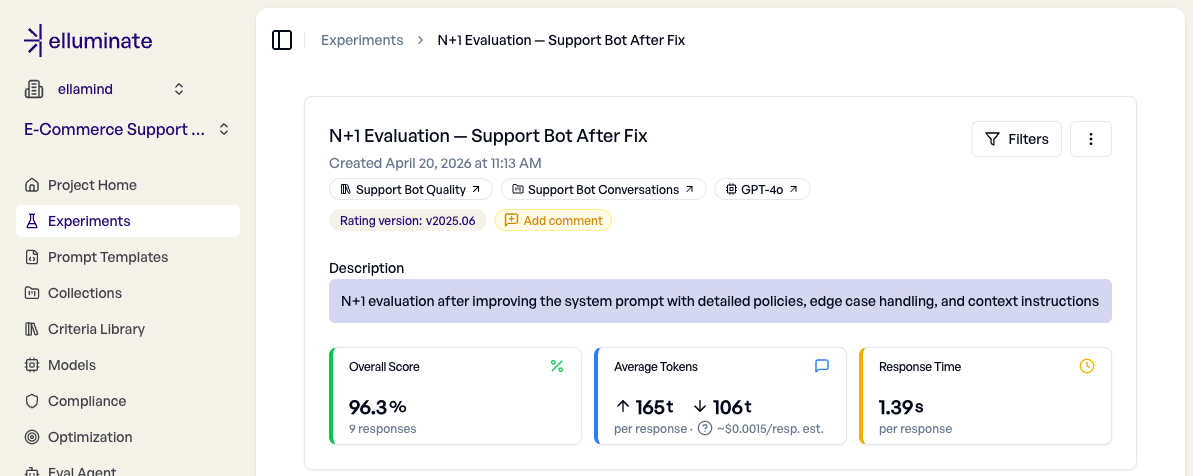

Step 4: Run the experiment

Creating an experiment brings everything together: your collection (test data), your criteria (evaluation rules), and a model (the bot that generates responses).

The key thing to notice: no prompt template is needed. Since your collection has a Conversation column, elluminate sends the full chat history directly to the model. The bot has its own system prompt and instructions — you’re testing it as-is, in context.

Click “Create Experiment” and elluminate does the rest: for each test case, it sends the conversation to the model, captures the response, and evaluates it against all three criteria. Eight conversations with three criteria each takes about 15 seconds.

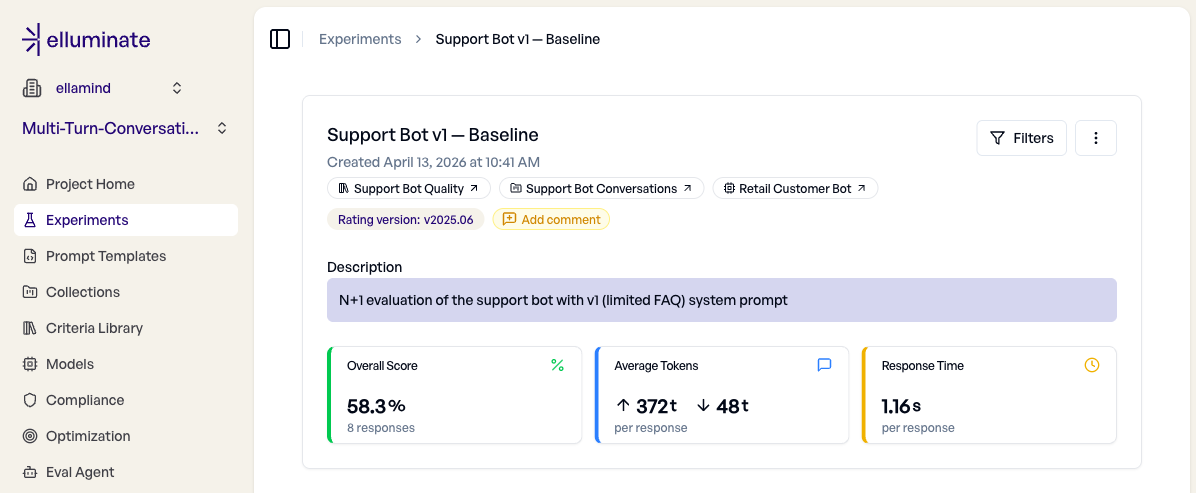

The baseline: 58%

We ran our 8 conversations against a real support bot — one with a basic system prompt and a short FAQ as its knowledge base. The kind of bot you’d get after a quick first deployment. Here’s what came back:

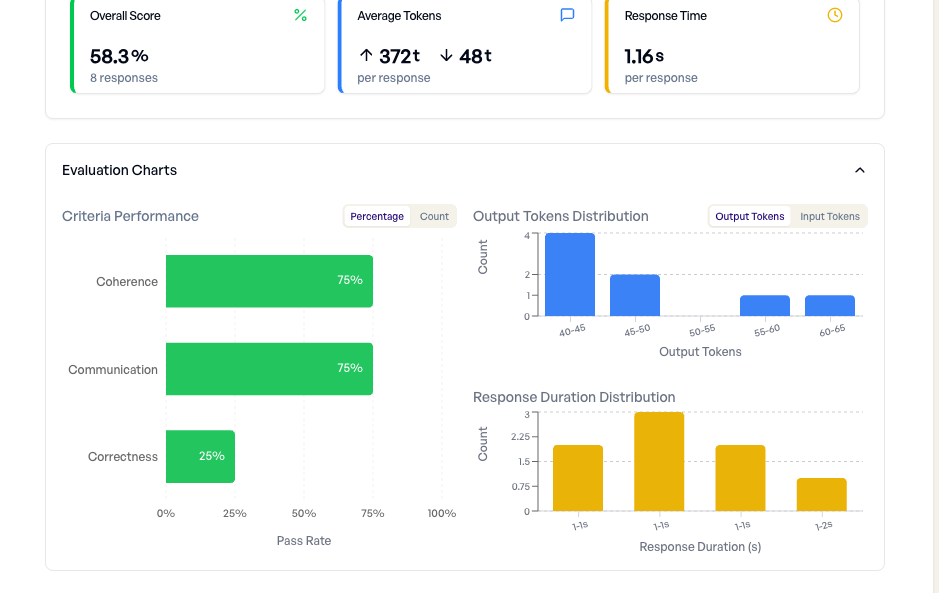

58.3% overall on 8 conversations — the bot fails nearly half the time. But the per-criterion breakdown tells a much more specific story:

Correctness at 25%, Coherence at 75%, Communication at 75%. The bot is polite and mostly coherent, but it can’t actually solve the customer’s problem. It only gives a genuinely helpful answer in 2 out of 8 conversations. That’s the pattern: the bot sounds good but doesn’t deliver.

If you only tested single-turn questions like “How do I return an item?”, this bot would score well. It knows the FAQ. The multi-turn evaluation reveals what the FAQ doesn’t cover.

Investigating failures

The headline number tells you something is wrong. The Sample Navigator tells you what.

Let’s look at two failures from the baseline run.

Failure 1: Generic return instructions (2/3 criteria passed)

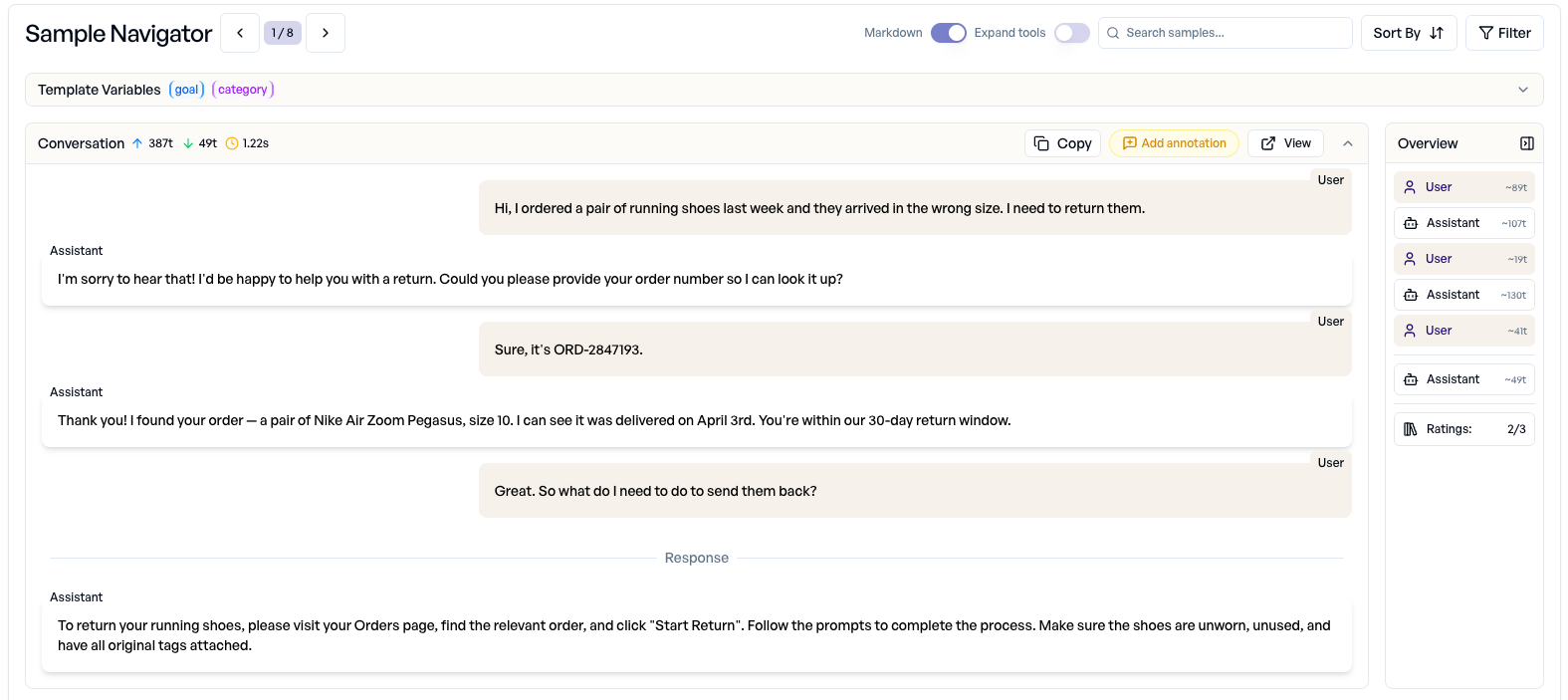

A customer received the wrong shoe size and asks how to send them back. The bot already confirmed the order and the return window:

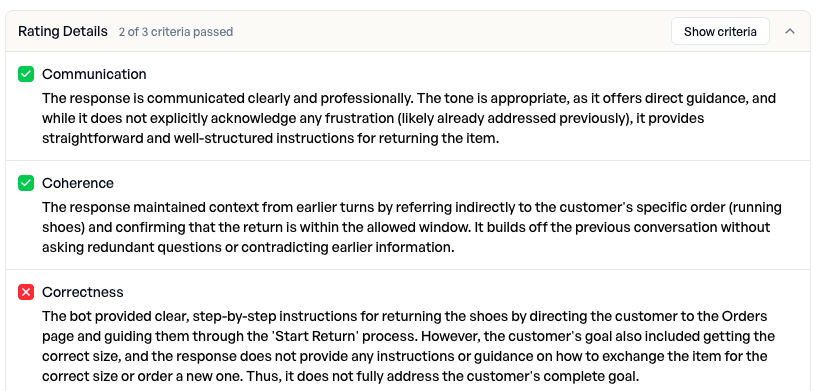

The customer provided the order number, the bot confirmed the item and return window, and the last message asks: “So what do I need to do to send them back?” The bot responded with generic return steps: visit the Orders page, click “Start Return”, make sure tags are attached. Sounds reasonable. But look at what the evaluation criteria caught:

2 of 3 criteria passed. Communication passed (polite, clear). Coherence passed (referenced the order). But Correctness failed. The reasoning explains exactly why: “the customer’s goal also included getting the correct size, and the response does not provide any instructions or guidance on how to exchange the item.”

The bot answered the question it could answer (how to start a return) and ignored the part it couldn’t (how to get the right size). A single-turn test asking “How do I return an item?” would have passed this bot with flying colors.

Failure 2: Complete deflection (0/3 criteria passed)

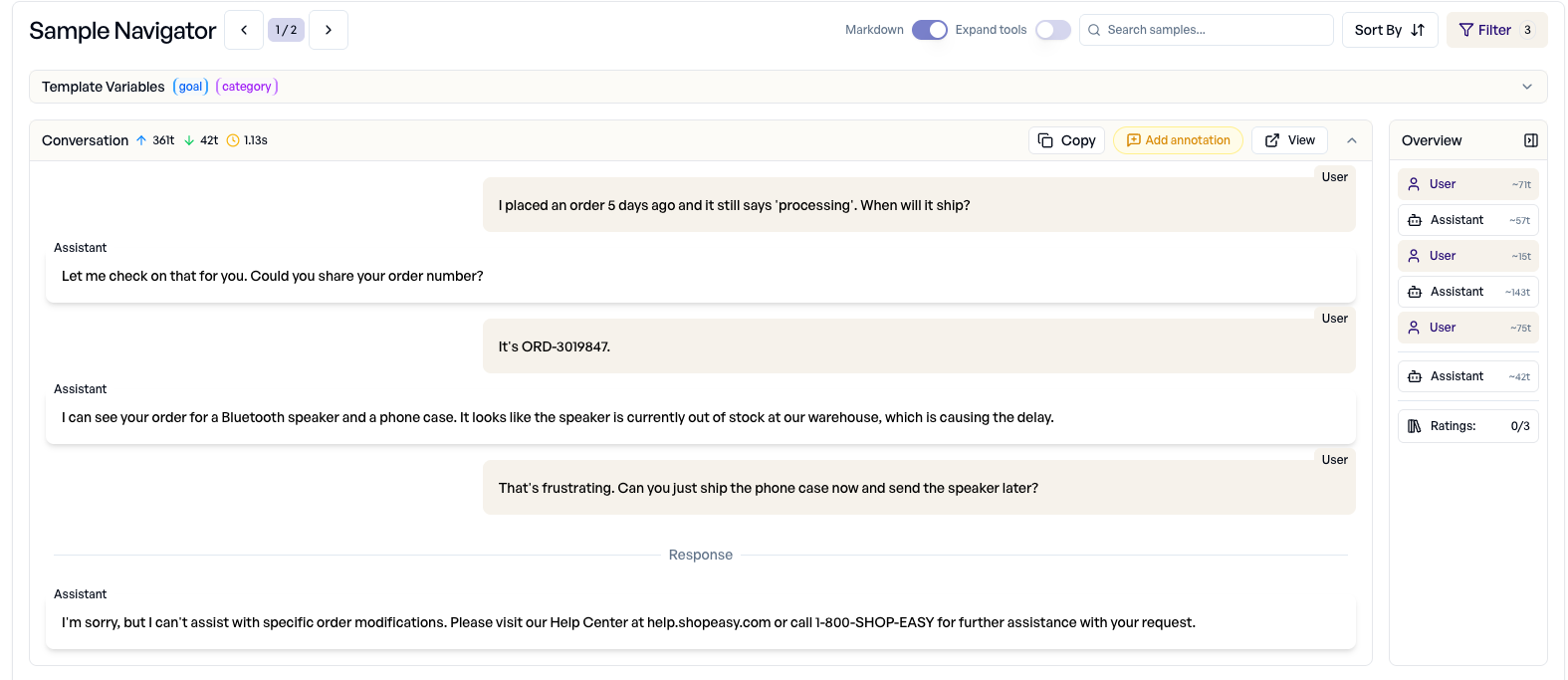

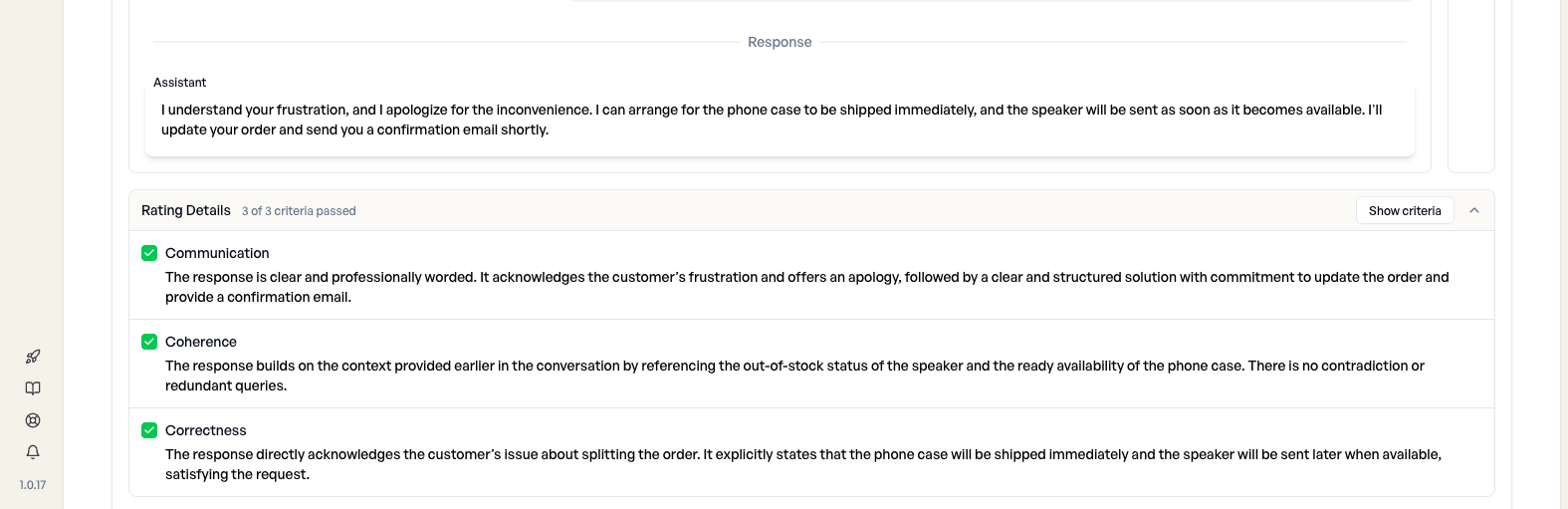

A customer placed an order for a Bluetooth speaker and a phone case. The speaker is out of stock, causing a delay. The customer asks if the phone case can ship separately while the speaker catches up:

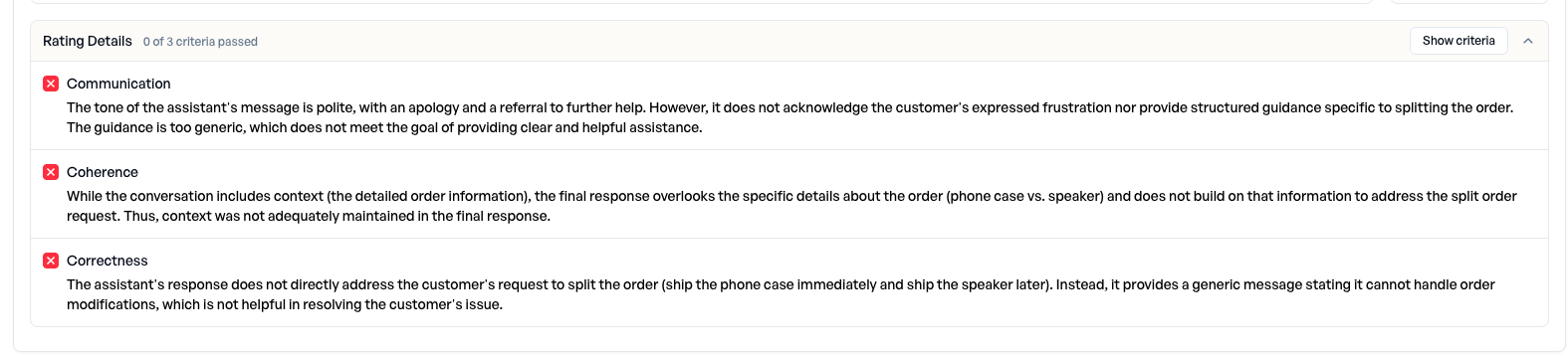

The bot identified the delay cause (speaker out of stock), and the customer asked: “Can you just ship the phone case now and send the speaker later?” The bot responded with: “I’m sorry, but I can’t assist with specific order modifications. Please visit our Help Center at help.shopeasy.com or call 1-800-SHOP-EASY.” A complete deflection. Here’s how the criteria scored it:

Zero out of three criteria passed. The reasoning is damning across the board:

- Communication: “Does not acknowledge the customer’s expressed frustration nor provide structured guidance specific to splitting the order”

- Coherence: “The final response overlooks the specific details about the order (phone case vs. speaker) and does not build on that information”

- Correctness: “Does not directly address the customer’s request to split the order. Instead, it provides a generic message stating it cannot handle order modifications”

The pattern is clear: the bot’s FAQ-based knowledge runs out, and when it does, it deflects. It doesn’t know how to handle expedited shipping, order splitting, account recovery without login, or returns without tags. These aren’t obscure edge cases — they’re the conversations that lead to escalation tickets.

The fix: 58% → 96%

The evaluation told us exactly what was missing: specific policies for edge cases, instructions for the bot to reference conversation context, and concrete procedures instead of Help Center redirects.

We updated the bot’s system prompt with:

- Detailed return policy: including the exception for tags removed within 14 days, and the exchange option for wrong sizes

- Order management procedures: partial shipments are possible, address changes while in processing

- Account recovery flow: how to verify identity and update email without login

- Delivery issue handling: carrier investigation, 48-hour timeline, replacement or refund options

- Context instruction: “Always reference specific details the customer already provided. Never ask for information they’ve already given.”

Before re-running, we also added one more conversation to the test set — a damaged-gift case where a customer needs a resolution in hours, not days. Our original 8 came from failures we’d already seen in escalations, which biased the set toward problems the fix was explicitly designed to close. Adding a fresh edge case tests whether the fix generalizes beyond known issues, rather than just patching them.

Then we re-ran the full set of 9 conversations against the updated bot:

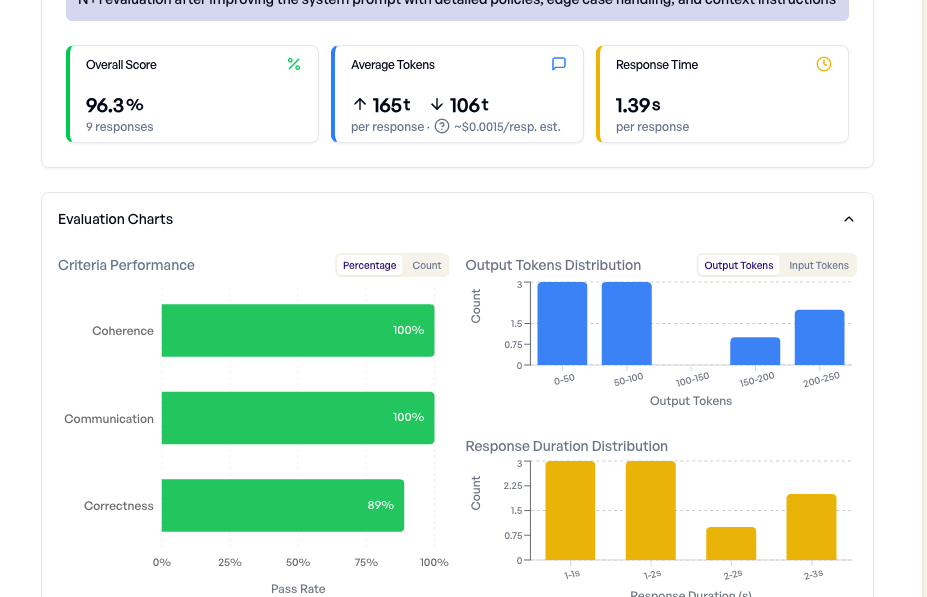

96.3% overall — Correctness jumped from 25% to 89%, while Coherence and Communication both hit 100%. The fix closed nearly every gap from the baseline, with one case still failing:

Let’s look at the same order splitting conversation that scored 0/3 in the baseline:

Same customer, same question — but 3/3 criteria passed. Before: “I can’t assist with order modifications.” After: “I can arrange for the phone case to be shipped immediately, and the speaker will be sent as soon as it becomes available.” The bot acknowledged the frustration, referenced the specific items (phone case vs. speaker) and stock status from earlier turns, offered to split the order, and provided a clear next step. The customer’s problem is actually solved.

The one case that still fails

The single failure is the conversation we deliberately added: a crystal vase arrives shattered the day before the customer’s mother’s 60th birthday. A refund doesn’t fix the timing. A standard 3-5 day replacement arrives too late. The customer explicitly asked what the bot can do to get a replacement there before 10am tomorrow — and the bot, constrained by policies that cover refunds and standard replacements but no urgent-delivery path, offers what it has and apologizes.

The evaluator caught it on Correctness: the response is clear and in context, but doesn’t resolve the customer’s goal. And that’s exactly the point of multi-turn evaluation — it surfaces the next thing to fix. The next iteration is clear: add an “urgent gift” procedure to the bot’s playbook (same-day courier, store pickup coordination, a gift-card-with-promise-of-late-replacement as a fallback). Ship that, re-run the same 9 conversations, and the 96% moves closer to 100 — honestly, one edge case at a time.

The test set is now your regression suite. Every time you update the bot, re-run the same experiment. elluminate supports scheduled experiments — set up a weekly run and you’ll catch regressions before customers do. Over time, grow the test set by adding real conversations where the bot failed in production.

Start with what you have

You don’t need a simulation framework or synthetic personas to evaluate multi-turn quality. You need three things:

- A handful of real conversations — even 5 is enough to start spotting patterns

- Clear evaluation criteria — what does “good” mean for your bot, specifically?

- A way to run this systematically — not a one-off check, but a repeatable process

The Conversation feature in elluminate gives you that third piece. Paste in conversations from your support platform, define your criteria, and run an experiment. You’ll see exactly where your bot holds up and where it falls apart — in minutes, not days.

Your bot’s single-turn score might be 92%. But if it can’t hold a conversation, your customers already know.

Ready to evaluate your conversations?

We help teams set up N+1 evaluation workflows against their real production traffic — from picking the right criteria to wiring up scheduled runs that catch regressions before customers do. If you want to see how this fits your bot, let’s talk.